ML infrastructure and its best practices support the training, testing, and deployment of models. They also help businesses achieve long-term objectives.

Best practices in ML infrastructure help manage, reduce, and prevent problems within the process. ML infrastructure is the foundation on which machine learning models are built, and because models differ from project to project, these best practices help allocate resources according to each project's requirements and functions.

Although developing an ML infrastructure can be a complex process, best practices help by bringing structure to it. In this article, we will learn more about the concept of ML infrastructure and the best practices businesses can implement.

According to Gartner, “AI will remain one of the top workloads driving infrastructure decisions through 2023. Accelerating AI pilots into production requires specific infrastructure resources that can grow and evolve alongside technology. AI models will need to be periodically refined by the enterprise IT team to ensure high success rates. This might include standardizing data pipelines or integrating machine learning (ML) models with streaming data sources to deliver real-time predictions.”

ML Infrastructure and its best practices in businesses

What is a Machine Learning Infrastructure?

An ML infrastructure combines the components, processes, and solutions used to develop, train, and manage machine learning models. It is also known as AI infrastructure and is a pivotal component of MLOps. It provides the means to manage and monitor machine learning workflows.

ML development is a complex, expensive, and tedious task. Many algorithms and frameworks are available for developing models, and these models require robust training to process data and make predictions. They also need to meet business requirements and adapt to future changes.

Businesses leverage an ML infrastructure to mitigate these challenges and to develop high-performing, cost-effective, and agile processes. A machine learning infrastructure requires the following components to scale business processes:

- Containers

- Orchestration Tools

- Hybrid and Multi-cloud Environments

- Open and Agile Architecture

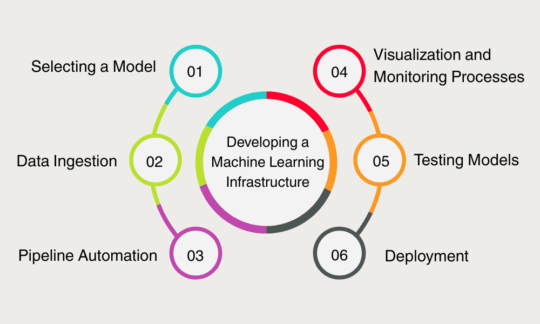

Developing a Machine Learning Infrastructure

To understand ML infrastructure in detail, it is important to know more about its components and their various aspects.

- Selecting a Model:

First, it is important to select an ML model that supports the business and its processes. The choice of model determines the data to be ingested, the tools and solutions required, and the interactions between components.

- Data Ingestion:

Data ingestion is also a pivotal component of an ML infrastructure. It covers the collection of data for training models and deploying applications.

It connects the infrastructure to various data sources, processing pipelines, and storage, so that data from those sources can be collected, processed, aggregated, and stored within the infrastructure. As a result, developers and businesses can leverage real-time data.
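The collect-and-aggregate step described above can be sketched in a few lines. This is only an illustration: the two in-memory sources, the source names `billing_csv` and `events_api`, and the `store` list are hypothetical stand-ins for real files, APIs, and a storage layer.

```python
import csv
import io
import json

def ingest_csv(text, source):
    """Parse CSV text into records tagged with the source they came from."""
    return [dict(row, source=source) for row in csv.DictReader(io.StringIO(text))]

def ingest_json_lines(text, source):
    """Parse newline-delimited JSON into records tagged with their source."""
    return [dict(json.loads(line), source=source)
            for line in text.splitlines() if line.strip()]

# Hypothetical sources standing in for files, APIs, or streams.
csv_source = "user_id,amount\n1,9.50\n2,12.00\n"
jsonl_source = '{"user_id": "3", "amount": "4.25"}\n'

store = []  # stand-in for the infrastructure's storage layer
store.extend(ingest_csv(csv_source, "billing_csv"))
store.extend(ingest_json_lines(jsonl_source, "events_api"))

# Once aggregated, records from every source can be processed uniformly.
total = sum(float(record["amount"]) for record in store)
print(len(store), total)
```

Tagging each record with its source keeps the aggregated data traceable back to where it was collected.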

- Pipeline Automation:

ML pipelines automate the steps that process data, train models, monitor processes, and deploy functions. Automation standardizes these processes and frees teams to focus on more complex tasks.
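As a minimal sketch of the idea, a pipeline can be modeled as an ordered list of steps applied to the data one after another. The step names `drop_missing` and `scale` and the helper `run_pipeline` are assumptions made for illustration, not part of any particular pipeline tool.

```python
def drop_missing(rows):
    """Remove missing values before any numeric processing."""
    return [r for r in rows if r is not None]

def scale(rows):
    """Scale values into the range (0, 1] by dividing by the maximum."""
    hi = max(rows)
    return [r / hi for r in rows]

def run_pipeline(data, steps):
    """Apply each step in order and log how many rows it produced."""
    for step in steps:
        data = step(data)
        print(f"{step.__name__}: {len(data)} rows")
    return data

result = run_pipeline([2.0, None, 4.0, 8.0], [drop_missing, scale])
print(result)
```

Because every run applies the same steps in the same order, the process is standardized, and adding a new stage only means appending another function to the list.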

- Visualization and Monitoring Processes:

ML infrastructure requires visualization and monitoring to understand workflows, track the accuracy of model training, and comprehend results. Visualization helps interpret and explain ML workflows and systems, whereas monitoring continuously observes those workflows.

When integrating visualization and monitoring into the infrastructure, businesses must use tools that ingest data regularly.
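One simple form of monitoring is to compare freshly ingested data against a baseline and raise a flag when it shifts. The sketch below assumes a single numeric feature and a hypothetical threshold of two baseline standard deviations; real monitoring tools use richer statistics.

```python
from statistics import mean, stdev

def drift_score(baseline, live):
    """Shift of the live mean from the baseline mean, in baseline standard deviations."""
    return abs(mean(live) - mean(baseline)) / stdev(baseline)

def check_drift(baseline, live, threshold=2.0):
    """Return True when the live data has moved past the assumed threshold."""
    return drift_score(baseline, live) > threshold

baseline = [10.0, 11.0, 9.0, 10.0, 10.0]   # values seen during training
stable = [10.0, 10.5, 9.5]                 # newly ingested, similar distribution
shifted = [14.0, 15.0, 13.0]               # newly ingested, clearly different

print(check_drift(baseline, stable))
print(check_drift(baseline, shifted))
```

Running a check like this every time new data is ingested is what makes regular ingestion a precondition for useful monitoring.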

- Testing Models:

While testing ML models, a few important steps need to be followed:

- Gathering and analyzing quantitative and qualitative data.

- Training and running multiple models in similar environments.

- Detecting errors in various processes.

ML model testing therefore requires data analysis, monitoring, and visualization tools.
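The steps above can be sketched as a small comparison harness: every candidate model is scored on the same held-out examples so that the results are comparable. The two threshold rules `model_a` and `model_b` and the `holdout` data are hypothetical placeholders for real trained models and test sets.

```python
def accuracy(model, examples):
    """Fraction of examples for which the model predicts the correct label."""
    correct = sum(1 for x, label in examples if model(x) == label)
    return correct / len(examples)

# Hypothetical candidate models: simple threshold rules.
def model_a(x):
    return int(x > 0.5)

def model_b(x):
    return int(x > 0.8)

# The same held-out data is used for every candidate.
holdout = [(0.1, 0), (0.4, 0), (0.6, 1), (0.9, 1)]

scores = {m.__name__: accuracy(m, holdout) for m in (model_a, model_b)}
best = max(scores, key=scores.get)
print(scores, best)
```

Errors show up as a drop in the score for one candidate, which is the point at which analysis and visualization tools become useful.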

- Deployment

The last stage in the ML infrastructure is deployment. It provides development teams with a model they can integrate into solutions and applications.

Understanding the relation between Machine Learning Infrastructure and MLOps

MLOps is a practice that brings together data science, data engineering, and machine learning engineering within ITOps. It helps manage the model lifecycle and run AI/ML processes on the machine learning infrastructure. MLOps also helps the ML infrastructure deliver agile and innovative solutions and makes it robust enough to interpret ML models and execute AI projects.

MLOps integrates tasks such as deployment, monitoring, and management within the ML infrastructure, applying best practices to AI and ML solutions.

Understanding the Requirement for ML Infrastructure

- Versioning and Management:

Model versioning is analogous to code versioning: it tracks each new version of a model within the ML infrastructure. It is more complex, however, because it requires solutions that track code, models, data, functions, and metrics together. Model management is likewise an entire process in itself. As businesses mature, so do their development, deployment, and maintenance of ML models, which makes versioning and management a pivotal part of a robust ML infrastructure. An ML infrastructure takes these requirements into account and incorporates solutions for them.
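To make the difference from code versioning concrete, here is a minimal sketch of a registry entry that records parameters, data, metrics, and the code version side by side. The `register` function, the in-memory `registry` list, the model name `churn`, and the example values are all hypothetical; production systems typically rely on a dedicated model registry.

```python
import hashlib
import json

def fingerprint(obj):
    """Short, stable hash of any JSON-serializable object."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]

registry = []  # stand-in for a persistent model registry

def register(name, params, training_data, metrics, code_version):
    """Record a new model version together with everything needed to reproduce it."""
    entry = {
        "name": name,
        "version": 1 + sum(1 for e in registry if e["name"] == name),
        "params_hash": fingerprint(params),
        "data_hash": fingerprint(training_data),
        "metrics": metrics,
        "code_version": code_version,
    }
    registry.append(entry)
    return entry

data = [[1, 0], [0, 1]]
v1 = register("churn", {"depth": 3}, data, {"accuracy": 0.81}, "abc123")
v2 = register("churn", {"depth": 5}, data, {"accuracy": 0.84}, "def456")
print(v1["version"], v2["version"], v1["data_hash"] == v2["data_hash"])
```

Comparing the hashes of two versions shows at a glance whether a change in metrics came from new data, new parameters, or new code.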

- Explainability and Interpretability:

ML models often require high levels of governance before they can be deployed. Explainability and interpretability are therefore important for understanding how models behave.

Interpretability refers to the ability to predict a model's outcome by understanding its input. Explainability refers to understanding how a model arrives at a particular output.

Within an ML infrastructure, models need both explainability and interpretability so that their outcomes can be verified as accurate.

- Deployment, Management, and Service:

An ML infrastructure also helps deploy, manage, and serve various models and processes. It helps analyze and evaluate older models and transition them to newer ones. ML models do not learn continuously on their own, however, so best practices in ML infrastructure provide CI/CD capabilities to develop and regenerate models.

Use Cases of Machine Learning Models

- An ML infrastructure is used in computer vision to classify images, detect objects, segment images, and restore images.

- It also supports speech and NLP tasks such as speech recognition, language translation, and text-to-speech synthesis.

- It recognizes patterns and identifies anomalies. It can therefore analyze, filter, and monitor content for personalization and segment markets for products.

Here are the Best Practices for ML Infrastructure

- Selecting the Location:

First, businesses must decide where the machine learning infrastructure will be located: on-premise or in the cloud, depending on requirements and projects. A cloud-based infrastructure can save time, cost, and effort, and providers such as Microsoft Azure, AWS, and GCP offer solutions tailored to a business's requirements and objectives.

An on-premise machine learning infrastructure, by contrast, is largely an upfront investment that lets businesses run projects without recurring usage fees. Pre-built options include Nvidia workstations and Lambda Labs servers.

- Calculating and Predicting Requirements:

Businesses must first identify and predict their future requirements, because the hardware behind an ML infrastructure affects both performance and cost. For instance, GPUs are typically used to run deep learning models, while CPUs are often sufficient for traditional ML models. Both kinds of model require large sets of data within the infrastructure.

The efficiency of GPUs and CPUs depends on the algorithms and functions they run, which in turn affects operations and cloud usage. These components require extra attention because they affect deployment.

One of the best practices while developing an ML infrastructure is therefore to strike a balance between underpowered and overpowered components.
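A rough back-of-the-envelope calculation can help with this balance. The sketch below estimates the memory needed for a model's weights and checks it against a device's capacity. The `overhead_factor` of 4 is an assumed rule of thumb for training in 32-bit precision (weights, gradients, and two optimizer buffers); it ignores activations and batch size, so treat the result as a lower bound rather than a sizing guarantee.

```python
def weights_gib(num_params, bytes_per_param=4):
    """Memory taken by the weights alone, in GiB (4 bytes per 32-bit parameter)."""
    return num_params * bytes_per_param / 2**30

def fits(num_params, device_gib, overhead_factor=4.0):
    """Check whether training plausibly fits on a device.

    overhead_factor is an assumption covering gradients and optimizer state.
    """
    return weights_gib(num_params) * overhead_factor <= device_gib

params = 1_000_000_000  # hypothetical one-billion-parameter model
print(round(weights_gib(params), 3))
print(fits(params, device_gib=16))
print(fits(params, device_gib=8))
```

An estimate like this shows quickly whether a planned component is underpowered for the workload or far larger than the workload needs.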

- Network and Storage Environments:

One of the best practices in machine learning infrastructure is to choose network and storage environments carefully. A suitable network environment keeps MLOps efficient and ensures seamless, reliable communication between the infrastructure's components. It also provides the networking capacity needed to process and store data.

An ML infrastructure also requires a robust storage environment to hold large data sets collected from various sources. Robust storage supports data ingestion, prevents delays, and allows complex training jobs to run.

- Segregating Model Training and Model Serving Solutions:

Businesses should also separate model training from model serving. Keeping the two apart allows each part of the infrastructure to be tested accurately and makes training and serving independent of each other, so that one can change or scale without disrupting the other.
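A minimal sketch of this separation: the training side produces a model artifact and writes it to disk, and the serving side only ever loads that artifact. The one-weight linear model, the JSON artifact format, and the file name `model.json` are assumptions chosen to keep the example self-contained.

```python
import json
import os
import tempfile

# --- Training side -------------------------------------------------------
def train(examples):
    """Fit y = w * x by least squares through the origin."""
    w = sum(x * y for x, y in examples) / sum(x * x for x, _ in examples)
    return {"weight": w}

def save_artifact(model, path):
    with open(path, "w") as f:
        json.dump(model, f)

# --- Serving side (needs only the artifact, not the training code) ------
def load_artifact(path):
    with open(path) as f:
        return json.load(f)

def predict(model, x):
    return model["weight"] * x

artifact_path = os.path.join(tempfile.mkdtemp(), "model.json")
save_artifact(train([(1.0, 2.0), (2.0, 4.0)]), artifact_path)

served = load_artifact(artifact_path)
print(predict(served, 3.0))
```

Because the artifact is the only thing the two sides share, the serving code can be tested against a fixed file, and training can be rerun without touching the serving path.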

- Securing Data and Processes:

Training and executing models require large volumes of data, and the data collected and used in these processes is often confidential and valuable. Any breach or manipulation of that data could lead to serious consequences. One of the best practices in ML infrastructure is therefore to monitor and encrypt data and to authorize access to it. Doing so also helps businesses understand and adhere to compliance requirements.
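As one narrow illustration of guarding against manipulation, a dataset can be signed with a keyed hash so that any later change is detectable. This sketch uses Python's standard `hmac` module; the key and the sample data are hypothetical, and the example covers integrity only. Encryption and access control need separate tooling.

```python
import hashlib
import hmac

def sign(data: bytes, key: bytes) -> str:
    """Compute a keyed SHA-256 signature for the data."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()

def verify(data: bytes, key: bytes, signature: str) -> bool:
    """Check the signature using a constant-time comparison."""
    return hmac.compare_digest(sign(data, key), signature)

key = b"demo-key-not-for-production"  # in practice, load from a secrets manager
dataset = b"user_id,amount\n1,9.50\n"

tag = sign(dataset, key)                             # stored when the data is collected
tampered = dataset.replace(b"9.50", b"0.50")

print(verify(dataset, key, tag))
print(verify(tampered, key, tag))
```

Checking the signature before each training run ensures that a model is never trained on data that was altered after collection.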

Conclusion:

In conclusion, developing a machine learning infrastructure is a complex process, and best practices help it run machine learning functions efficiently. Although machine learning infrastructure is still in its infancy, it already enables various capabilities within the workflow. By following these best practices, businesses can leverage AI-based solutions in their operations.
